Archive for the ‘Azure’ Category

Introduction to Day 2 Serverless Operations – Part 2

In part 1 of this series, I introduced some of the most common Day 2 serverless operations, focusing on Function as a Service.

In this part, I will focus on serverless application integration services commonly used in event-driven architectures.

For this post, I will look into message queue services, event routing services, and workflow orchestration services for building event-driven architectures.

Message queue services

Message queues enable asynchronous communication between different components in an event-driven architecture (EDA). This means that producers (systems or services generating events) can send messages to the queue and continue their operations without waiting for consumers (systems or services processing events) to respond or be available.

Security and Access Control

Security should always be the priority, as it protects your data, controls access, and ensures compliance from the outset. This includes data protection, limiting permissions, and enforcing least privilege policies.

- When using Amazon SQS, manage permissions using AWS IAM policies to restrict access to queues and follow the principle of least privilege.

- When using Amazon SQS, enable server-side encryption (SSE) for sensitive data at rest.

- When using Amazon SNS, manage topic policies and IAM roles to control who can publish or subscribe, as explained here:

https://docs.aws.amazon.com/sns/latest/dg/security_iam_id-based-policy-examples.html

- When using Amazon SNS, enable server-side encryption (SSE) for sensitive data at rest, as explained here:

https://docs.aws.amazon.com/sns/latest/dg/sns-server-side-encryption.html

- When using Azure Service Bus, use managed identities and configure roles, following the principle of least privilege, as explained here:

https://learn.microsoft.com/en-us/azure/service-bus-messaging/service-bus-managed-service-identity

- When using Azure Service Bus, enable encryption at rest using customer-managed keys, as explained here:

https://learn.microsoft.com/en-us/azure/service-bus-messaging/configure-customer-managed-key

- When using Google Cloud Pub/Sub, tighten and review IAM policies to ensure only authorized users and services can publish or subscribe to topics, as explained here:

https://cloud.google.com/pubsub/docs/access-control

- When using Google Cloud Pub/Sub, configure encryption at rest using customer-managed encryption keys, as explained here:

https://cloud.google.com/pubsub/docs/encryption
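To make the least-privilege idea above more concrete, here is a minimal sketch of an IAM identity policy for a single Amazon SQS queue, built as a plain Python dictionary. The queue ARN, the `producer`/`consumer` role names, and the helper function are hypothetical and exist only for illustration; the action names are standard SQS IAM actions.

```python
import json


def least_privilege_queue_policy(queue_arn: str, role: str) -> dict:
    """Build an IAM identity policy limited to one queue and one role's needs."""
    actions_by_role = {
        # A producer only needs to send messages.
        "producer": ["sqs:SendMessage"],
        # A consumer needs to receive, delete, and inspect queue attributes.
        "consumer": [
            "sqs:ReceiveMessage",
            "sqs:DeleteMessage",
            "sqs:GetQueueAttributes",
        ],
    }
    if role not in actions_by_role:
        raise ValueError(f"unknown role: {role}")
    return {
        "Version": "2012-10-17",
        "Statement": [
            {
                "Effect": "Allow",
                "Action": actions_by_role[role],
                "Resource": queue_arn,  # a single queue, never "*"
            }
        ],
    }


# Hypothetical ARN used only for illustration.
QUEUE_ARN = "arn:aws:sqs:us-east-1:123456789012:orders-queue"
producer_policy = least_privilege_queue_policy(QUEUE_ARN, "producer")
print(json.dumps(producer_policy, indent=2))
```

Splitting producer and consumer permissions this way means a compromised producer cannot read or delete messages from the queue.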

Monitoring and Observability

Once security is in place, implement comprehensive monitoring and observability to gain visibility into system health, performance, and failures. This enables proactive detection and response to issues.

- When using Amazon SQS, monitor queue metrics such as message count, age of oldest message, and queue length using Amazon CloudWatch.

- When using Amazon SQS, set up CloudWatch alarms for thresholds (e.g., high message backlog or processing latency).

- When using Amazon SNS, use CloudWatch to track message delivery status, failure rates, and subscription metrics, as explained here:

https://docs.aws.amazon.com/sns/latest/dg/sns-monitoring-using-cloudwatch.html

- When using Azure Service Bus, use Azure Monitor to track metrics such as queue length, message count, dead-letter messages, and throughput. Set up alerts for abnormal conditions (e.g., message backlog, high latency), as explained here:

https://learn.microsoft.com/en-us/azure/service-bus-messaging/monitor-service-bus

- When using Azure Service Bus, monitor and manage message sessions for ordered processing when required, as explained here:

https://learn.microsoft.com/en-us/azure/service-bus-messaging/message-sequencing

- When using Google Cloud Pub/Sub, monitor message throughput, error rates, and latency, and set up alerts for operational anomalies, as explained here:

https://cloud.google.com/pubsub/docs/monitoring
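As an illustration of the alerting recommendations above, the sketch below builds the parameters for a CloudWatch alarm on the age of the oldest message in an SQS queue. The queue name, threshold, and helper function are assumptions; the keys follow the parameters of the CloudWatch `PutMetricAlarm` API, and the actual API call is left as a comment because it requires boto3 and AWS credentials.

```python
def backlog_alarm_params(queue_name: str, max_age_seconds: int) -> dict:
    """Return PutMetricAlarm parameters for a stale-message alarm on one queue."""
    return {
        "AlarmName": f"{queue_name}-oldest-message-age",
        "Namespace": "AWS/SQS",
        "MetricName": "ApproximateAgeOfOldestMessage",
        "Dimensions": [{"Name": "QueueName", "Value": queue_name}],
        "Statistic": "Maximum",
        "Period": 300,           # evaluate the metric in 5-minute windows
        "EvaluationPeriods": 3,  # alarm only after 3 consecutive breaches
        "Threshold": float(max_age_seconds),
        "ComparisonOperator": "GreaterThanThreshold",
    }


# Hypothetical queue name and threshold (10 minutes).
params = backlog_alarm_params("orders-queue", 600)
print(params["AlarmName"])

# Assuming boto3 is installed and credentials are configured:
#   import boto3
#   boto3.client("cloudwatch").put_metric_alarm(**params)
```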

Error Handling

With monitoring established, set up robust error handling mechanisms, including alerts, retries, and dead-letter queues, to ensure reliability and rapid remediation of failures.

- When using Amazon SQS, configure Dead Letter Queues (DLQs) to capture messages that fail processing repeatedly for later analysis and remediation.

- When using Amazon SNS, integrate with DLQs (using SQS as a DLQ) for messages that cannot be delivered to endpoints, as explained here:

https://docs.aws.amazon.com/sns/latest/dg/sns-dead-letter-queues.html

- When using Azure Service Bus, regularly review and process messages in dead-letter queues to ensure failed messages are not ignored, as explained here:

https://learn.microsoft.com/en-us/azure/service-bus-messaging/service-bus-dead-letter-queues

https://learn.microsoft.com/en-us/azure/service-bus-messaging/enable-dead-letter

- When using Google Cloud Pub/Sub, monitor for undelivered or unacknowledged messages and set up dead-letter topics if needed, as explained here:

https://cloud.google.com/pubsub/docs/handling-failures
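For the SQS case, a dead-letter queue is attached to a source queue through its `RedrivePolicy` attribute. The sketch below only builds that attribute; the DLQ ARN and the receive count are hypothetical values, and applying the attribute to a real queue is shown as a comment.

```python
import json


def redrive_attributes(dlq_arn: str, max_receive_count: int) -> dict:
    """Build the queue attribute that routes repeatedly failing messages to a DLQ."""
    if max_receive_count < 1:
        raise ValueError("max_receive_count must be at least 1")
    policy = {
        "deadLetterTargetArn": dlq_arn,
        # Number of receives allowed before the message moves to the DLQ.
        "maxReceiveCount": str(max_receive_count),
    }
    # SQS expects the policy as a JSON string, not a nested object.
    return {"RedrivePolicy": json.dumps(policy)}


# Hypothetical ARN used only for illustration.
DLQ_ARN = "arn:aws:sqs:us-east-1:123456789012:orders-dlq"
attributes = redrive_attributes(DLQ_ARN, 5)
print(attributes["RedrivePolicy"])

# Assuming boto3 is installed and credentials are configured:
#   import boto3
#   boto3.client("sqs").set_queue_attributes(QueueUrl=source_queue_url,
#                                            Attributes=attributes)
```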

Scaling and Performance

After ensuring security, visibility, and error resilience, focus on scaling and performance. Monitor throughput, latency, and resource utilization, and configure auto-scaling to match demand efficiently.

- When using Amazon SQS, adjust queue settings or consumer concurrency as traffic patterns change.

- When using Amazon SQS, monitor usage for unexpected spikes.

- When using Azure Service Bus, adjust throughput units, use partitioned queues/topics, and implement batching or parallel processing to handle varying loads, as explained here:

https://learn.microsoft.com/en-us/azure/service-bus-messaging/service-bus-performance-improvements

- When using Google Cloud Pub/Sub, adjust quotas and scaling policies as message volumes change to avoid service interruptions, as explained here:

https://cloud.google.com/pubsub/quotas
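Batching, mentioned above as a way to handle varying loads, can be sketched in a provider-neutral way. The helper below splits a list of messages into fixed-size batches; the default of 10 is an assumption borrowed from the per-call limit of the SQS `SendMessageBatch` API, and other services have different limits.

```python
from typing import Iterator, List, Sequence, TypeVar

T = TypeVar("T")

# Assumption: 10 entries per call, the SQS SendMessageBatch limit.
MAX_BATCH_SIZE = 10


def batches(items: Sequence[T], size: int = MAX_BATCH_SIZE) -> Iterator[List[T]]:
    """Yield consecutive slices of `items`, each with at most `size` elements."""
    if size < 1:
        raise ValueError("size must be at least 1")
    for start in range(0, len(items), size):
        yield list(items[start:start + size])


messages = [f"event-{i}" for i in range(23)]
batch_sizes = [len(batch) for batch in batches(messages)]
print(batch_sizes)  # [10, 10, 3]
```

Sending 23 messages this way takes 3 API calls instead of 23, which reduces both latency overhead and request costs.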

Maintenance

Finally, establish ongoing maintenance routines such as regular reviews, updates, cost optimization, and compliance audits to sustain operational excellence and adapt to evolving needs.

- When using Amazon SQS, purge queues as needed and archive messages if required for compliance.

- When using Amazon SNS, review and clean up unused topics and subscriptions, as explained here:

https://docs.aws.amazon.com/sns/latest/dg/sns-delete-subscription-topic.html

- When using Azure Service Bus, delete unused messages, as explained here:

https://learn.microsoft.com/en-us/azure/service-bus-messaging/batch-delete

- When using Google Cloud Pub/Sub, delete unused messages, as explained here:

https://cloud.google.com/pubsub/docs/replay-overview

Event routing services

Event routing services act as the central hub in event-driven architectures, receiving events from producers and distributing them to the appropriate consumers. This decouples producers from consumers, allowing each to operate, scale, and fail independently without direct awareness of each other.

Monitoring and Observability

Serverless event routing services require robust monitoring and observability to track event flows, detect anomalies, and ensure system health; this is typically achieved through metrics, logs, and dashboards that provide real-time visibility into event processing and failures.

- When using Amazon EventBridge, set up CloudWatch metrics and logs to monitor event throughput, failures, latency, and rule matches. Use CloudWatch Alarms to alert on anomalies or failures in event delivery, as explained here:

https://docs.aws.amazon.com/eventbridge/latest/userguide/eb-monitoring.html

- When using Azure Event Grid, use Azure Monitor and Event Grid metrics to track event delivery, failures, and latency, as explained here:

https://learn.microsoft.com/en-us/azure/event-grid/monitor-namespaces

- When using Azure Event Grid, set up alerts for undelivered events or high failure rates, as explained here:

https://learn.microsoft.com/en-us/azure/event-grid/set-alerts

- When using Google Eventarc, monitor for event delivery status, trigger activity, and errors, as explained here:

https://cloud.google.com/eventarc/standard/docs/monitor

Error Handling and Dead-Letter Management

Effective error handling uses mechanisms like retries and circuit breakers to manage transient failures, while dead-letter queues (DLQs) capture undelivered or failed events for later analysis and remediation, preventing data loss and supporting troubleshooting.

- When using Amazon EventBridge, configure dead-letter queues (DLQ) for failed event deliveries. Set retry policies and monitor DLQ for undelivered events to ensure no data loss, as explained here:

https://docs.aws.amazon.com/eventbridge/latest/userguide/eb-rule-dlq.html

- When using Azure Event Grid, configure retry policies and use dead-lettering for events that cannot be delivered after multiple attempts, as explained here:

https://learn.microsoft.com/en-us/azure/event-grid/manage-event-delivery

- When using Google Eventarc, use Pub/Sub dead letter topics for failed event deliveries, as explained here:

https://cloud.google.com/eventarc/docs/retry-events
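The managed services above implement retries and dead-lettering for you, but the underlying mechanism is easy to sketch. The example below is a simplified, in-memory illustration (not any provider's actual implementation): it retries a failing handler with exponential backoff and moves the event to a dead-letter list once the attempts are exhausted. All names and default values are hypothetical.

```python
import time
from typing import Callable, List


def deliver_with_retries(
    event: dict,
    handler: Callable[[dict], None],
    dead_letters: List[dict],
    max_attempts: int = 3,
    base_delay: float = 0.1,
    sleep: Callable[[float], None] = time.sleep,
) -> bool:
    """Try to deliver an event; dead-letter it after max_attempts failures."""
    if max_attempts < 1:
        raise ValueError("max_attempts must be at least 1")
    for attempt in range(1, max_attempts + 1):
        try:
            handler(event)
            return True
        except Exception as exc:
            if attempt == max_attempts:
                # Keep the event and the failure reason for later analysis.
                dead_letters.append(
                    {"event": event, "error": repr(exc), "attempts": attempt}
                )
                return False
            # Exponential backoff: base_delay, 2x, 4x, ...
            sleep(base_delay * 2 ** (attempt - 1))
    return False
```

Passing `sleep` as a parameter keeps the function testable without real waiting; in production you would leave the default.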

Security and Access Management

Security and access management involve configuring fine-grained permissions to control which users and services can publish, consume, or manage events, ensuring that only authorized entities interact with event routing resources and that sensitive data remains protected.

- When using Amazon EventBridge, review and update IAM policies for event buses, rules, and targets. Use resource-based policies to restrict who can publish or subscribe to events, as explained here:

https://docs.aws.amazon.com/eventbridge/latest/userguide/eb-manage-iam-access.html

https://docs.aws.amazon.com/eventbridge/latest/userguide/eb-use-resource-based.html

- When using Azure Event Grid, assign a managed identity to an Event Grid topic and configure a role, following the principle of least privilege, as explained here:

https://learn.microsoft.com/en-us/azure/event-grid/enable-identity-custom-topics-domains

https://learn.microsoft.com/en-us/azure/event-grid/add-identity-roles

- When using Google Eventarc, manage IAM permissions for triggers, event sources, and destinations, following the principle of least privilege, as explained here:

https://cloud.google.com/eventarc/standard/docs/access-control

- When using Google Eventarc, encrypt sensitive data at rest using customer-managed encryption keys, as explained here:

https://cloud.google.com/eventarc/docs/use-cmek

Scaling and Performance

Serverless platforms automatically scale event routing services in response to workload changes, spinning up additional resources during spikes and scaling down during lulls, while performance optimization involves tuning event patterns, batching, and concurrency settings to minimize latency and maximize throughput.

- When using Amazon EventBridge, monitor event throughput and adjust quotas or request service limit increases as needed. Optimize event patterns and rules for efficiency, as explained here:

https://docs.aws.amazon.com/eventbridge/latest/userguide/eb-quota.html

- When using Azure Event Grid, monitor for throttling or delivery issues, as explained here:

https://learn.microsoft.com/en-us/azure/event-grid/monitor-push-reference

- When using Google Eventarc, monitor quotas and usage (e.g., triggers per location), as explained here:

https://cloud.google.com/eventarc/docs/quotas
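One practical way to optimize event patterns is to make them as narrow as possible, so that a rule only fires for the events a target actually needs. The sketch below shows an EventBridge-style event pattern and a deliberately simplified matcher that supports exact-value matching only; real EventBridge patterns also support prefix, numeric, and other operators that are not modeled here. The sample events are made up.

```python
def matches(pattern: dict, event: dict) -> bool:
    """Simplified matcher: every pattern field must exist in the event,
    and the event's value must be one of the listed values."""
    for key, expected in pattern.items():
        if key not in event:
            return False
        actual = event[key]
        if isinstance(expected, dict):
            # Nested pattern: recurse into the nested event object.
            if not isinstance(actual, dict) or not matches(expected, actual):
                return False
        elif actual not in expected:
            return False
    return True


# Narrow pattern: only EC2 instances that were terminated.
pattern = {
    "source": ["aws.ec2"],
    "detail-type": ["EC2 Instance State-change Notification"],
    "detail": {"state": ["terminated"]},
}

terminated = {
    "source": "aws.ec2",
    "detail-type": "EC2 Instance State-change Notification",
    "detail": {"instance-id": "i-0123456789abcdef0", "state": "terminated"},
}
running = {**terminated, "detail": {"instance-id": "i-0123456789abcdef0", "state": "running"}}

print(matches(pattern, terminated), matches(pattern, running))
```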

Workflow orchestration services

Workflow services are designed to coordinate and manage complex sequences of tasks or business processes that involve multiple steps and services. They act as orchestrators, ensuring each step in a process is executed in the correct order, handling transitions, and managing dependencies between steps.

Monitoring and Observability

Set up and review monitoring dashboards, logs, and alerts to ensure workflows are running correctly and to quickly detect anomalies or failures.

- When using AWS Step Functions, monitor executions, check logs, and set up CloudWatch metrics and alarms to ensure workflows run as expected, as explained here:

https://docs.aws.amazon.com/step-functions/latest/dg/monitoring-logging.html

- When using Azure Logic Apps, use Azure Monitor and built-in diagnostics to track workflow runs and troubleshoot failures, as explained here:

https://learn.microsoft.com/en-us/azure/logic-apps/monitor-logic-apps-overview

- When using Google Workflows, use Cloud Logging and Monitoring to observe workflow executions and set up alerts for failures or anomalies, as explained here:

https://cloud.google.com/workflows/docs/monitor

Error Handling and Retry

Investigate failed workflow executions, enhance error handling logic (such as retries and catch blocks), and resubmit failed runs where appropriate. This is crucial for maintaining workflow reliability and minimizing manual intervention.

- When using AWS Step Functions, review failed executions, configure retry/catch logic, and update workflows to handle errors gracefully, as explained here:

https://docs.aws.amazon.com/step-functions/latest/dg/concepts-error-handling.html

- When using Azure Logic Apps, handle failed runs, configure error actions, and resubmit failed instances as needed, as explained here:

https://learn.microsoft.com/en-us/azure/logic-apps/error-exception-handling

- When using Google Workflows, inspect failed executions, define retry policies, and update error handling logic in workflow definitions, as explained here:

https://cloud.google.com/workflows/docs/reference/syntax/catching-errors
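For AWS Step Functions, retry and catch logic is declared in the state machine definition (Amazon States Language). Below is a minimal sketch of such a definition as a Python dictionary, together with a small helper that computes the wait before each retry. The state names, Lambda ARN, and helper are hypothetical; the `Retry` and `Catch` field names are part of the Amazon States Language.

```python
import json

definition = {
    "StartAt": "ProcessOrder",
    "States": {
        "ProcessOrder": {
            "Type": "Task",
            # Hypothetical Lambda function ARN.
            "Resource": "arn:aws:lambda:us-east-1:123456789012:function:process-order",
            "Retry": [
                {
                    "ErrorEquals": ["States.TaskFailed"],
                    "IntervalSeconds": 2,
                    "MaxAttempts": 3,
                    "BackoffRate": 2.0,
                }
            ],
            # Anything still failing after the retries goes to a failure state.
            "Catch": [{"ErrorEquals": ["States.ALL"], "Next": "NotifyFailure"}],
            "End": True,
        },
        "NotifyFailure": {
            "Type": "Fail",
            "Error": "OrderProcessingFailed",
            "Cause": "Task failed after all retries",
        },
    },
}


def retry_delays(retrier: dict) -> list:
    """Seconds waited before each retry attempt for one Retry entry."""
    interval = retrier.get("IntervalSeconds", 1)
    rate = retrier.get("BackoffRate", 2.0)
    attempts = retrier.get("MaxAttempts", 3)
    return [interval * rate ** n for n in range(attempts)]


retrier = definition["States"]["ProcessOrder"]["Retry"][0]
print(retry_delays(retrier))  # [2.0, 4.0, 8.0]
print(json.dumps(definition, indent=2))
```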

Security and Access Management

Workflow orchestration services require continuous enforcement of granular access controls and the principle of least privilege, ensuring that each function and workflow has only the permissions necessary for its specific tasks.

- When using AWS Step Functions, use AWS Identity and Access Management (IAM) for fine-grained control over who can access and manage workflows, as explained here:

https://docs.aws.amazon.com/step-functions/latest/dg/auth-and-access-control-sfn.html

- When using Azure Logic Apps, use Azure Role-Based Access Control (RBAC) and managed identities for secure access to resources and connectors, as explained here:

https://learn.microsoft.com/en-us/azure/logic-apps/authenticate-with-managed-identity

- When using Google Workflows, use Google Cloud IAM for permissions and access management, which allows you to define who can execute, view, or manage workflows, as explained here:

https://cloud.google.com/workflows/docs/use-iam-for-access

Versioning and Updates

Workflow orchestration services use versioning to track and manage different iterations of workflows or services, allowing multiple versions to coexist and enabling users to select, test, or revert to specific versions as needed.

- When using AWS Step Functions, update state machines, manage versions, and test changes before deploying to production, as explained here:

https://docs.aws.amazon.com/step-functions/latest/dg/concepts-state-machine-version.html

- When using Azure Logic Apps, manage deployment slots, and use versioning for rollback if needed, as explained here:

https://learn.microsoft.com/en-us/azure/logic-apps/manage-logic-apps-with-azure-portal

- When using Google Workflows, update workflows, test changes in staging, and deploy updates with minimal disruption, as explained here:

https://cloud.google.com/workflows/docs/best-practice

Cost Optimization

Regularly review usage and billing data, optimize workflow design (e.g., reduce unnecessary steps or external calls), and adjust resource allocation to control operational costs.

- When using AWS Step Functions, analyze usage and optimize workflow design to reduce execution and resource costs, as explained here:

https://docs.aws.amazon.com/step-functions/latest/dg/sfn-best-practices.html#cost-opt-exp-workflows

- When using Azure Logic Apps, monitor consumption, review billing, and optimize triggers/actions to control costs, as explained here:

https://learn.microsoft.com/en-us/azure/logic-apps/plan-manage-costs

- When using Google Workflows, analyze workflow usage, optimize steps, and monitor billing to reduce costs, as explained here:

https://cloud.google.com/workflows/docs/best-practice#optimize-usage

Summary

In this blog post, I presented the most common Day 2 serverless operations when using application integration services (message queues, event routing services, and workflow orchestrations) to build modern applications.

I looked at aspects such as observability, error handling, security, performance, etc.

Building event-driven architectures requires time to grasp which services best support this approach. However, gaining a foundational understanding of key areas is essential for effective day 2 serverless operations.

About the author

Eyal Estrin is a cloud and information security architect, an AWS Community Builder, and the author of the books Cloud Security Handbook and Security for Cloud Native Applications, with more than 25 years in the IT industry.

You can connect with him on social media (https://linktr.ee/eyalestrin).

Opinions are his own and not the views of his employer.

Kubernetes Troubleshooting in the Cloud

Kubernetes has been used by organizations for nearly a decade — from wrapping applications inside containers, pushing them to a container repository, to full production deployment.

At some point, we need to troubleshoot various issues in Kubernetes environments.

In this blog post, I will review some of the common ways to troubleshoot Kubernetes, based on the hyperscale cloud environments.

Common Kubernetes issues

Before we deep dive into Kubernetes troubleshooting, let us review some of the common Kubernetes errors:

- CrashLoopBackOff — A container in a pod keeps failing to start, so Kubernetes tries to restart it over and over, waiting longer each time. This usually means there’s a problem with the app, something important is missing, or the setup is wrong.

- ImagePullBackOff — Kubernetes can’t download the container image for a pod. This might happen if the image name or tag is wrong, there’s a problem logging into the image registry, or there are network issues.

- CreateContainerConfigError — Kubernetes can’t set up the container because there’s a problem with the settings like wrong environment variables, incorrect volume mounts, or security settings that don’t work.

- PodInitializing — A pod is stuck starting up, usually because the initial setup containers are failing, taking too long, or there are problems with the network or attached storage.
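When a cluster runs many pods, it can help to scan for these error states automatically. The sketch below parses the JSON produced by `kubectl get pods -o json` and reports containers waiting with one of the reasons listed above. The sample data is made up, and the set of reasons is an assumption you can extend (for example, with `PodInitializing` if you also track how long a pod has been in that state).

```python
import json

PROBLEM_REASONS = {"CrashLoopBackOff", "ImagePullBackOff", "CreateContainerConfigError"}


def find_problem_pods(pod_list: dict) -> list:
    """Return (pod, container, reason) tuples for containers stuck in a known bad state."""
    findings = []
    for pod in pod_list.get("items", []):
        name = pod["metadata"]["name"]
        status = pod.get("status", {})
        statuses = status.get("initContainerStatuses", []) + status.get("containerStatuses", [])
        for container in statuses:
            # A waiting container carries the reason Kubernetes reports for the wait.
            reason = container.get("state", {}).get("waiting", {}).get("reason")
            if reason in PROBLEM_REASONS:
                findings.append((name, container["name"], reason))
    return findings


# Made-up sample in the shape of `kubectl get pods -o json` output.
sample = json.loads("""
{"items": [
  {"metadata": {"name": "web-1"},
   "status": {"containerStatuses": [
     {"name": "app", "state": {"waiting": {"reason": "CrashLoopBackOff"}}}]}},
  {"metadata": {"name": "web-2"},
   "status": {"containerStatuses": [
     {"name": "app", "state": {"running": {"startedAt": "2024-01-01T00:00:00Z"}}}]}}
]}
""")
problem_pods = find_problem_pods(sample)
print(problem_pods)
```

In practice, you could feed it real data with `kubectl get pods -o json` piped into a script that calls `json.load(sys.stdin)`.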

Kubectl for Kubernetes troubleshooting

Kubectl is the native and recommended way to manage Kubernetes, and, among other things, it assists in troubleshooting various aspects of Kubernetes.

Below are some examples of using kubectl:

- View all pods and their statuses:

kubectl get pods

- Get detailed information and recent events for a specific pod:

kubectl describe pod <pod-name>

- View logs from a specific container in a multi-container pod:

kubectl logs <pod-name> -c <container-name>

- Open an interactive shell inside a running pod:

kubectl exec -it <pod-name> -- /bin/bash

- Check the status of cluster nodes:

kubectl get nodes

- Get detailed information about a specific node:

kubectl describe node <node-name>

Additional information about kubectl can be found at:

https://kubernetes.io/docs/reference/kubectl

Remote connectivity to Kubernetes nodes

In rare cases, you may need to remotely connect to a Kubernetes node as part of troubleshooting. Some of the reasons to do so may be troubleshooting hardware failures, collecting system-level logs, cleaning up disk space, restarting services, etc.

Below are secure ways to remotely connect to Kubernetes nodes:

- To connect to an Amazon EKS node using AWS Systems Manager Session Manager from the command line, use the following command:

aws ssm start-session --target <instance-id>

For more details, see: https://docs.aws.amazon.com/eks/latest/best-practices/protecting-the-infrastructure.html

- To connect to an Azure AKS node using Azure Bastion from the command line, run the commands below to get the private IP address of the AKS node and SSH from a bastion connected environment:

az aks machine list --resource-group <myResourceGroup> --cluster-name <myAKSCluster> \
  --nodepool-name <nodepool1> -o table

ssh -i /path/to/private_key.pem azureuser@<node-private-ip>

For more details, see: https://learn.microsoft.com/en-us/azure/aks/node-access

- To connect to a GKE node using the gcloud command combined with Identity-Aware Proxy (IAP), use the following command:

gcloud compute ssh <GKE_NODE_NAME> --zone <ZONE> --tunnel-through-iap

For more details, see: https://cloud.google.com/compute/docs/connect/ssh-using-iap#gcloud

Monitoring and observability

To assist in troubleshooting, Kubernetes has various logs; some are enabled by default (such as container logs), and some need to be explicitly enabled (such as Control Plane logs).

Below are some of the ways to collect logs in managed Kubernetes services.

Amazon EKS

To collect EKS node and application logs (including resource utilization), use CloudWatch Container Insights.

To collect EKS Control Plane logs to CloudWatch logs, follow the instructions below:

https://docs.aws.amazon.com/eks/latest/userguide/control-plane-logs.html

To collect metrics from the EKS cluster, use Amazon Managed Service for Prometheus, as explained below:

https://docs.aws.amazon.com/eks/latest/userguide/prometheus.html

Azure AKS

To collect AKS node and application logs (including resource utilization), use Azure Monitor Container Insights.

To collect AKS Control Plane logs to Azure Monitor, configure the diagnostic setting, as explained below:

https://docs.azure.cn/en-us/aks/monitor-aks?tabs=cilium#aks-control-planeresource-logs

To collect metrics from the AKS cluster, use Azure Monitor managed service for Prometheus.

Google GKE

GKE node and Pod logs are sent automatically to Google Cloud Logging, as documented below:

https://cloud.google.com/kubernetes-engine/docs/concepts/about-logs#collecting_logs

GKE Control Plane logs are not enabled by default, as documented below:

https://cloud.google.com/kubernetes-engine/docs/how-to/view-logs

To collect metrics from the GKE cluster, use Google Cloud Managed Service for Prometheus, as explained below:

https://cloud.google.com/stackdriver/docs/managed-prometheus/setup-managed

Enable GKE usage metering to collect resource utilization, as documented below:

https://cloud.google.com/kubernetes-engine/docs/how-to/cluster-usage-metering#enabling

Troubleshooting network connectivity issues

There may be various network-related issues when managing Kubernetes clusters. Some of the common network issues are nodes or pods not joining the cluster, inter-pod communication failures, connectivity to the Kubernetes API server, etc.

Below are some of the ways to troubleshoot network issues in managed Kubernetes services.

Amazon EKS

Enable network policy logs to investigate network connection through Amazon VPC CNI, as explained below:

https://docs.aws.amazon.com/eks/latest/userguide/network-policies-troubleshooting.html

Monitor your EKS workloads for network performance issues.

Temporarily enable VPC flow logs (due to high storage cost in large production environments) to query network traffic of EKS clusters deployed in a dedicated subnet.

Azure AKS

Use Microsoft's AKS troubleshooting guides for the following scenarios:

- Connectivity issues to the AKS API server

- Outbound connectivity issues from the AKS cluster

- Connectivity issues to applications deployed on top of AKS

Use Container Network Observability to troubleshoot connectivity issues within an AKS cluster, as explained below:

https://learn.microsoft.com/en-us/azure/aks/container-network-observability-concepts

Google GKE

Use the guide below to troubleshoot network connectivity issues in GKE:

https://cloud.google.com/kubernetes-engine/docs/troubleshooting/connectivity-issues-in-cluster

Temporarily enable VPC flow logs (due to high storage cost in large production environments) to query network traffic of GKE clusters deployed in a dedicated subnet, as explained below:

https://cloud.google.com/vpc/docs/using-flow-logs
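Regardless of the cloud provider, a quick first step when investigating the connectivity issues above is to check whether a TCP connection can be opened at all, for example from a debug pod to the Kubernetes API server or to another service. Below is a minimal sketch using only the Python standard library; the host and port in the usage comment are assumptions and depend on your cluster.

```python
import socket


def can_connect(host: str, port: int, timeout: float = 3.0) -> bool:
    """Return True if a TCP connection to host:port succeeds within the timeout."""
    try:
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:
        # Covers refused connections, timeouts, and DNS resolution failures.
        return False


# Example usage from inside a pod (assumed in-cluster API server address):
#   can_connect("kubernetes.default.svc", 443)
```

A `False` result does not tell you why the connection failed, but it narrows the problem down to the network path (DNS, network policies, security groups, or firewalls) rather than the application.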

Summary

I am sure there are many more topics to cover when troubleshooting problems with Kubernetes clusters; however, in this blog post, I highlighted the most common cases.

Kubernetes by itself is an entire domain of expertise and requires many hours to deep dive into and understand.

I strongly encourage anyone using Kubernetes to read vendors’ documentation, and practice in development environments, until you gain hands-on experience running production environments.

Kubernetes and Container Portability: Navigating Multi-Cloud Flexibility

For many years we have been told that one of the major advantages of using containers is portability, meaning, the ability to move our application between different platforms or even different cloud providers.

In this blog post, we will review some of the aspects of designing an architecture based on Kubernetes, allowing application portability between different cloud providers.

Before we begin the conversation, we need to recall that each cloud provider has its opinionated way of deploying and consuming services, with different APIs and different service capabilities.

There are multiple ways to design a workload, from traditional use of VMs to event-driven architectures using message queues and Function-as-a-Service.

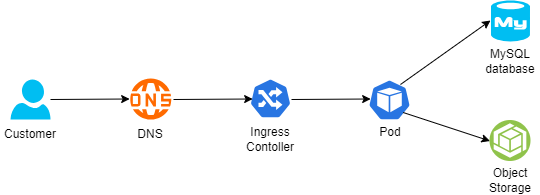

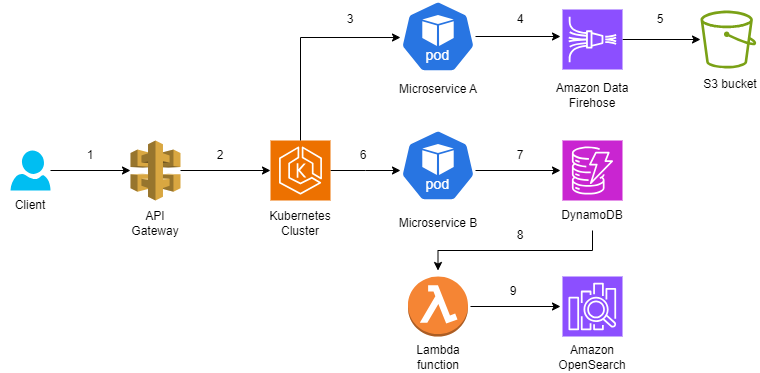

Below is an example of architecture:

- A customer connecting from the public Internet, resolving the DNS name from a global DNS service (such as Cloudflare)

- The request is sent to an Ingress controller, which forwards the traffic to a Kubernetes Pod exposing an application

- For static content retrieval, the application is using an object storage service through a Kubernetes CSI driver

- For persistent data storage, the application uses a backend-managed MySQL database

The 12-factor app methodology angle

When talking about the portability and design of modern applications, I always like to look back at the 12-factor app methodology:

Config

To be able to deploy immutable infrastructure on different cloud providers, we should store variables (such as SDLC stage, i.e., Dev, Test, Prod) and credentials (such as API keys, passwords, etc.) outside the containers.

Some examples:

- AWS Systems Manager Parameter Store (for environment variables or static credentials) or AWS Secrets Manager (for static credentials)

- Azure App Configuration (for environment variables or configuration settings) or Azure Key Vault (for static credentials)

- Google Config Controller (for configuration settings), Google Secret Manager (for static credentials), or GKE Workload Identity (for access to Cloud SQL or Google Cloud Storage)

- HashiCorp Vault (for environment variables or static credentials)
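Whichever service stores the values, the application itself should only read them at runtime, typically from environment variables injected into the Pod. Here is a minimal sketch of that pattern; the variable names (`APP_STAGE`, `DB_HOST`, `DB_PASSWORD`) and the `AppConfig` class are hypothetical.

```python
import os
from dataclasses import dataclass
from typing import Mapping


@dataclass(frozen=True)
class AppConfig:
    stage: str
    db_host: str
    db_password: str


REQUIRED_KEYS = ("APP_STAGE", "DB_HOST", "DB_PASSWORD")


def load_config(env: Mapping[str, str] = os.environ) -> AppConfig:
    """Read configuration from the environment and fail fast if anything is missing."""
    missing = [key for key in REQUIRED_KEYS if key not in env]
    if missing:
        raise RuntimeError(f"missing configuration: {', '.join(missing)}")
    return AppConfig(
        stage=env["APP_STAGE"],
        db_host=env["DB_HOST"],
        db_password=env["DB_PASSWORD"],
    )


# Illustration with an explicit mapping instead of the real environment.
config = load_config(
    {"APP_STAGE": "dev", "DB_HOST": "mysql.internal", "DB_PASSWORD": "example-only"}
)
print(config.stage, config.db_host)
```

Because nothing cloud-specific is baked into the image, the same container can run on any provider as long as the platform injects these values.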

Backing services

To truly design for application portability between cloud providers, we need to architect our workload where a Pod can be attached and re-attached to a backend service.

For storage services, it is recommended to prefer services that Pods can connect to via CSI drivers.

Some examples:

- Amazon S3 (for object storage), Amazon EBS (for block storage), or Amazon EFS (for NFS storage)

- Azure Blob (for object storage), Azure Disk (for block storage), or Azure Files (for NFS or CIFS storage)

- Google Cloud Storage (for object storage), Compute Engine Persistent Disks (for block storage), or Google Filestore (for NFS storage)

For database services, it is recommended to prefer open-source engines deployed in a managed service, instead of cloud-opinionated databases.

Some examples:

- Amazon RDS for MySQL (for managed MySQL) or Amazon RDS for PostgreSQL (for managed PostgreSQL)

- Azure Database for MySQL (for managed MySQL) or Azure Database for PostgreSQL (for managed PostgreSQL)

- Google Cloud SQL for MySQL (for managed MySQL) or Google Cloud SQL for PostgreSQL (for managed PostgreSQL)

Port binding

To allow customers access to our application, Kubernetes exports a URL (i.e., DNS name) and a network port.

Since load-balancers offered by the cloud providers are opinionated to their eco-systems, we should use an open-source or third-party solution that will allow us to replace the ingress controller and enforce network policies (for example to be able to enforce both inbound and pod-to-pod communication), or a service mesh solution (for mTLS and full layer 7 traffic inspection and enforcement).

Some examples:

- NGINX (an open-source ingress controller), supported by Amazon EKS, Azure AKS, or Google GKE

- Calico CNI plugin (for enforcing network policies), supported by Amazon EKS, Azure AKS, or Google GKE

- Cilium CNI plugin (for enforcing network policies), supported by Amazon EKS, Azure AKS, or Google GKE

- Istio (for service mesh capabilities), supported by Amazon EKS, Azure AKS, or Google GKE

Logs

Although not directly related to container portability, logs are an essential part of application and infrastructure maintenance. More specifically, observability (i.e., the ability to collect logs, metrics, and traces) lets us anticipate issues before they impact customers.

Although each cloud provider has its own monitoring and observability services, it is recommended to consider open-source solutions, supported by all cloud providers, and stream logs to a central service, to be able to continue monitoring the workloads, regardless of the cloud platform.

Some examples:

- Prometheus — monitoring and alerting solution

- Grafana — visualization and alerting solution

- OpenTelemetry — a collection of tools for exporting telemetry data

Immutability

Although not directly mentioned in the 12-factor app methodology, to allow portability of containers between different Kubernetes environments, it is recommended to build a container image from scratch (i.e., avoid using cloud-specific container images), and package the minimum number of binaries and libraries. As previously mentioned, to create an immutable application, you should avoid storing credentials, or any other unique identifiers (including unique configurations) or data inside the container image.

Infrastructure as Code

Deployment of workloads using Infrastructure as Code, as part of a CI/CD pipeline, will allow deploying an entire workload (from pulling the latest container image, backend infrastructure, and Kubernetes deployment process) in a standard way between different cloud providers, and different SDLC stages (Dev, Test, Prod).

Some examples:

- OpenTofu — An open-source fork of Terraform, allows deploying entire cloud environments using Infrastructure as Code

- Helm Charts — An open-source solution for software deployment on top of Kubernetes

Summary

In this blog post, I have reviewed the concept of container portability, which allows organizations to decrease their reliance on cloud provider opinionated solutions by using open-source solutions.

Not all applications are suitable for portability, and there are many benefits of using cloud-opinionated solutions (such as serverless services), but for simple architectures, it is possible to design for application portability.

About the author

Eyal Estrin is a cloud and information security architect, an AWS Community Builder, and the author of the books Cloud Security Handbook and Security for Cloud Native Applications, with more than 20 years in the IT industry.

You can connect with him on social media (https://linktr.ee/eyalestrin).

Opinions are his own and not the views of his employer.

Stop bringing old practices to the cloud

When organizations migrate to the public cloud, they often mistakenly look at the cloud as “somebody else’s data center”, or “a suitable place to run a disaster recovery site”, hence, bringing old practices to the public cloud.

In this blog post, I will review some of the common old (and perhaps bad) practices organizations are still using today in the cloud.

Mistake #1 — The cloud is cheaper

I often hear IT veterans comparing the public cloud to the on-prem data center as a cheaper alternative, due to versatile pricing plans and cost of storage.

In some cases, this may be true, but focusing on specific use cases from a cost perspective is too narrow, and misses the benefits of the public cloud: agility, scale, automation, and managed services.

Don’t get me wrong — cost is an important factor, but it is time to look at things from an efficiency point of view and embed it as part of any architecture decision.

Begin by looking at the business requirements and ask yourself (among others):

- What are you trying to achieve, and what capabilities do you need? Only then figure out which services will allow you to accomplish your needs.

- Do you need persistent storage? Great. What are your data access patterns? Do you need the data to be available in real time, or can you store data in an archive tier?

- Your system needs to respond to customers’ requests — does your application need to provide a fast response to API calls, or is it ok to provide answers from a caching service, while calls are going through an asynchronous queuing service to fetch data?

Mistake #2 — Using legacy architecture components

Many organizations are still using legacy practices in the public cloud — from moving VMs in a “lift & shift” pattern to cloud environments, using SMB/CIFS file services (such as Amazon FSx for Windows File Server, or Azure Files), deploying databases on VMs and manually maintaining them, etc.

For static and stable legacy applications, the old practices will work, but for how long?

Begin by asking yourself:

- How will your application handle unpredictable loads? Autoscaling is great, but can your application scale down when resources are not needed?

- What value are you getting by maintaining backend services such as storage and databases?

- What value are you getting by continuing to use commercial license database engines? Perhaps it is time to consider using open-source or community-based database engines (such as Amazon RDS for PostgreSQL, Azure Database for MySQL, or OpenSearch) to have wider community support and perhaps be able to minimize migration efforts to another cloud provider in the future.

Mistake #3 — Using traditional development processes

In the old data center, we used to develop monolithic applications, with a stack of components (VMs, databases, and storage) glued together, making it challenging to release new versions/features, upgrade, scale, etc.

As more organizations embraced the public cloud, the shift to a DevOps culture allowed them to develop and deploy new capabilities much faster, using smaller teams, each owning a specific component, able to independently release new component versions and benefit from autoscaling in response to real-time load, regardless of other components in the architecture.

Instead of hard-coded, manual configuration files, prone to human error, it is time to move to modern CI/CD processes. It is time to automate everything that does not require a human decision, handle everything as code (from Infrastructure as Code and Policy as Code to the actual application’s code), store everything in a central code repository (and later on in a central artifact repository), and be able to control authorization, auditing, roll-back (in case of bugs in code), and fast deployments.

Using CI/CD processes allows us to minimize changes between different SDLC stages: the same code (in the code repository) deploys the Dev/Test/Prod environments, environment variables switch between target environments, backend service connection settings, and credentials (keys, secrets, etc.), and the same testing capabilities (such as static code analysis, vulnerable package detection, etc.) run at every stage.
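As a minimal sketch of this idea, the same code can read its target environment and connection settings from environment variables injected by the pipeline. The variable names (`APP_ENV`, `DATABASE_URL`, `LOG_LEVEL`) and the `Settings` class are hypothetical, chosen only for illustration.

```python
import os
from dataclasses import dataclass


@dataclass(frozen=True)
class Settings:
    environment: str
    database_url: str
    log_level: str


def load_settings(env=None) -> Settings:
    """Build settings from environment variables (names are assumptions)."""
    env = os.environ if env is None else env
    environment = env.get("APP_ENV", "dev")
    if environment not in {"dev", "test", "prod"}:
        raise ValueError(f"unknown APP_ENV: {environment!r}")
    try:
        database_url = env["DATABASE_URL"]
    except KeyError:
        raise RuntimeError("DATABASE_URL must be set by the deployment pipeline") from None
    # Default to verbose logging only in Dev; the pipeline may override it.
    log_level = env.get("LOG_LEVEL", "DEBUG" if environment == "dev" else "INFO")
    return Settings(environment, database_url, log_level)


# The same code, two different stages: only the injected values change.
prod = load_settings({"APP_ENV": "prod", "DATABASE_URL": "postgresql://db.internal/app"})
dev = load_settings({"DATABASE_URL": "postgresql://localhost/app"})
```

Failing fast on a missing or unknown value keeps configuration mistakes in the pipeline, rather than surfacing them at runtime in production.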

Mistake #4 — Static vs. Dynamic Mindset

Traditional deployment had a static mindset. Applications were packed inside VMs, containing code/configuration, data, and unique characteristics (such as session IDs). In many cases architectures kept a 1:1 correlation between the front-end component, and the backend, meaning, a customer used to log in through a front-end component (such as a load-balancer), a unique session ID was forwarded to the presentation tier, moving to a specific business logic tier, and from there, sometimes to a specific backend database node (in the DB cluster).

Now consider what will happen if the front tier crashes or is unresponsive due to high load. What will happen if a mid-tier or back-end tier is not able to respond on time to a customer’s call? How will such issues impact the customers’ experience if they have to refresh, or log in all over again?

The cloud offers us a dynamic mindset. Workloads can scale up or down according to load. Workloads may be up and running, offering services for a short amount of time, and be decommissioned when no longer required.

It is time to consider immutability. Store session IDs outside compute nodes (whether VMs, containers, or Function-as-a-Service).
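To illustrate, here is a minimal sketch of compute nodes that keep no session state of their own. The `SessionStore` interface and the in-memory implementation are assumptions standing in for a shared external store; in a real deployment the store would be a managed service reachable by every node.

```python
import secrets
import time
from typing import Optional, Protocol


class SessionStore(Protocol):
    def put(self, session_id: str, data: dict, ttl_seconds: int) -> None: ...
    def get(self, session_id: str) -> Optional[dict]: ...


class InMemorySessionStore:
    """Stand-in for an external, shared session store (illustration only)."""

    def __init__(self, clock=time.monotonic):
        self._clock = clock
        self._items = {}

    def put(self, session_id, data, ttl_seconds):
        self._items[session_id] = (self._clock() + ttl_seconds, dict(data))

    def get(self, session_id):
        entry = self._items.get(session_id)
        if entry is None:
            return None
        expires_at, data = entry
        if self._clock() >= expires_at:
            del self._items[session_id]  # expired sessions are dropped
            return None
        return dict(data)


class WebNode:
    """A stateless compute node: all session data lives in the store."""

    def __init__(self, name: str, store: SessionStore):
        self.name = name
        self.store = store

    def login(self, user: str) -> str:
        session_id = secrets.token_urlsafe(16)
        self.store.put(session_id, {"user": user}, ttl_seconds=1800)
        return session_id

    def whoami(self, session_id: str) -> Optional[str]:
        session = self.store.get(session_id)
        return session["user"] if session else None


store = InMemorySessionStore()
node_a = WebNode("node-a", store)
node_b = WebNode("node-b", store)
sid = node_a.login("alice")
del node_a  # simulate the node being replaced or scaled in
user_seen_by_b = node_b.whoami(sid)
```

Because any node can serve any request, replacing a node does not force the customer to log in again.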

Still struggling with patch management? It’s time to create immutable images, and simply replace an entire component with a newer version, instead of having to pet each running compute component.

Use CI/CD processes to package compute components (such as VM or container images). Keep artifacts as small as possible (to decrease deployment and load time).

Regularly scan for outdated components (such as binaries and libraries), and on any development cycle update the base images.

Keep all data outside the images, on a persistent storage — it is time to embrace object storage (suitable for a variety of use cases from logging, data lakes, machine learning, etc.)

Store unique configuration inside environment variables, loaded at deployment/load time (from services such as AWS Systems Manager Parameter Store or Azure App Configuration), and for variables containing sensitive information use secrets management services (such as AWS Secrets Manager, Azure Key Vault, or Google Secret Manager).

Mistake #5 — Old observability mindset

Many organizations that migrated workloads to the public cloud kept their investment in legacy monitoring solutions (mostly built on top of deployed agents) and kept shipping logs (application, performance, security, etc.) from the cloud environment back to on-prem. They did so without considering the cost of egress data from the cloud, or the cost of storing the vast amounts of logs generated by the various cloud services. In many cases, these setups are still based on static log files, and sometimes even on legacy protocols (such as Syslog).

It is time to embrace a modern mindset. It is fairly easy to collect logs from various services in the cloud (as a matter of fact, some logs such as audit logs are enabled for 90 days by default).

It is time to consider cloud-native services, from SIEM services (such as Microsoft Sentinel or Google Security Operations) to observability services (such as Amazon CloudWatch, Azure Monitor, or Google Cloud Observability), capable of ingesting an (almost) infinite number of events, streaming logs and metrics in near real-time (instead of still using log files), and providing an overview of an entire customer-facing service (made out of various compute, network, storage, and database services).

Speaking about security, the dynamic nature of cloud environments does not allow us to keep legacy systems that scan configuration and attack surface at long intervals (such as 24 hours or several days), just to find out that our workload is exposed to unauthorized parties, that we made a mistake leaving configuration in a vulnerable state (still deploying resources exposed to the public Internet?), or that we kept our components outdated.

It is time to embrace automation and continuously scan configuration and authorization, and gain actionable insights on what to fix, as soon as possible (and what is vulnerable, but not directly exposed from the Internet, and can be taken care of at lower priority).
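The prioritization described here can be sketched as a small policy check. The `Resource` fields, the 30-day patch threshold, and the two priority levels are assumptions made for illustration; a real scanner would read this data from the cloud provider's inventory and configuration APIs.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Resource:
    name: str
    publicly_exposed: bool
    encrypted_at_rest: bool
    days_since_last_patch: int


@dataclass(frozen=True)
class Finding:
    resource: str
    issue: str
    priority: str


def scan(resources, max_patch_age_days=30):
    """Return findings, with Internet-exposed resources listed first."""
    findings = []
    for resource in resources:
        issues = []
        if not resource.encrypted_at_rest:
            issues.append("data is not encrypted at rest")
        if resource.days_since_last_patch > max_patch_age_days:
            issues.append("components are outdated")
        # Vulnerable and exposed: fix now. Vulnerable but internal: lower priority.
        priority = "high" if resource.publicly_exposed else "low"
        findings.extend(Finding(resource.name, issue, priority) for issue in issues)
    order = {"high": 0, "low": 1}
    return sorted(findings, key=lambda f: (order[f.priority], f.resource))


inventory = [
    Resource("internal-db", publicly_exposed=False, encrypted_at_rest=False, days_since_last_patch=5),
    Resource("public-api", publicly_exposed=True, encrypted_at_rest=True, days_since_last_patch=90),
    Resource("batch-worker", publicly_exposed=False, encrypted_at_rest=True, days_since_last_patch=3),
]
report = scan(inventory)
```

Running a check like this on every configuration change, rather than once a day, is what turns a scan into continuous, actionable insight.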

Mistake #6 — Failing to embrace cloud-native services

This is often due to a lack of training and knowledge about cloud-native services or capabilities.

Many legacy workloads were built on top of 3-tier architecture since this was the common way most IT/developers knew for many years. Architectures were centralized and monolithic, and organizations had to consider scale, and deploy enough compute resources, many times in advance, failing to predict spikes in traffic/customer requests.

It is time to embrace distributed systems, based on event-driven architectures, using managed services (such as Amazon EventBridge, Azure Event Grid, or Google Eventarc), where the cloud provider takes care of load (i.e., deploying enough back-end compute services), and we can stream events, and be able to read events, without having to worry whether the service will be able to handle the load.
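The decoupling that event-driven architectures provide can be shown with a tiny in-process publish/subscribe sketch. The `EventBus` class and the event names are hypothetical; a managed event routing service plays this role in the cloud and also takes care of delivery, retries, and scale.

```python
from collections import defaultdict
from typing import Callable

Handler = Callable[[dict], None]


class EventBus:
    """In-process stand-in for a managed event router (illustration only)."""

    def __init__(self):
        self._subscribers = defaultdict(list)

    def subscribe(self, event_type: str, handler: Handler) -> None:
        self._subscribers[event_type].append(handler)

    def publish(self, event_type: str, payload: dict) -> int:
        """Deliver the event to every subscriber; return how many received it."""
        handlers = self._subscribers.get(event_type, [])
        for handler in handlers:
            handler(payload)
        return len(handlers)


bus = EventBus()
invoices, emails = [], []

# Two independent consumers; the producer knows nothing about either of them.
bus.subscribe("order.created", lambda event: invoices.append(event["order_id"]))
bus.subscribe("order.created", lambda event: emails.append(f"receipt for {event['order_id']}"))

delivered = bus.publish("order.created", {"order_id": "A-100"})
ignored = bus.publish("order.cancelled", {"order_id": "A-100"})
```

Adding a third consumer requires no change to the producer, which is the property that lets components scale and evolve independently.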

We can’t talk about cloud-native services without mentioning functions (such as AWS Lambda, Azure Functions, or Cloud Run functions). Although functions have their challenges (being vendor-opinionated, a maximum execution time, cold starts, a learning curve, etc.), they have so much potential when designing modern applications. To name a few examples where FaaS is suitable, we can look at real-time data processing (such as IoT sensor data), GenAI text generation (such as text responses for chatbots, providing answers to customers in call centers), and video transcoding (such as converting videos to different formats or resolutions), and those are just a small number of examples.

Functions can be suitable in a microservice architecture, where, for example, one microservice can stream logs to a managed Kafka service, some microservices can trigger functions to run queries against the backend database, and some can store data in a fully-managed, serverless database (such as Amazon DynamoDB, Azure Cosmos DB, or Google Spanner).

Mistake #7 — Using old identity and access management practices

No doubt we need to authenticate and authorize every request and keep to the principle of least privilege, but how many times have we seen bad practices such as storing credentials in code or configuration files? (“It’s just in the Dev environment; we will fix it before moving to Prod…”)

How many times have we seen developers making changes directly in production environments?

In the cloud, IAM is tightly integrated into all services, and some cloud providers (such as the AWS IAM service) allow you to configure fine-grained permissions down to specific resources (for example, allow only users from specific groups, who performed MFA-based authentication, access to a specific S3 bucket).

It is time to switch from using static credentials to using temporary credentials or even roles — when an identity requires access to a resource, it will have to authenticate, and its required permissions will be reviewed until temporary (short-lived / time-based) access is granted.
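A minimal sketch of the temporary-credential flow: the identity asks for permissions, the request is checked against policy, and access is granted only for a short time. The `CredentialBroker` class, the permission names, and the 15-minute default are assumptions for illustration and do not represent any cloud provider's API.

```python
import secrets
import time
from dataclasses import dataclass


@dataclass(frozen=True)
class TemporaryCredential:
    identity: str
    permissions: frozenset
    token: str
    expires_at: float


class CredentialBroker:
    """Issues short-lived credentials after checking a policy (illustration only)."""

    def __init__(self, policy, clock=time.time):
        self._policy = policy  # maps identity -> set of allowed permissions
        self._clock = clock

    def issue(self, identity, requested, ttl_seconds=900):
        allowed = self._policy.get(identity, set())
        requested = frozenset(requested)
        if not requested <= allowed:
            raise PermissionError(f"{identity} may not request {sorted(requested - allowed)}")
        return TemporaryCredential(
            identity, requested, secrets.token_urlsafe(32), self._clock() + ttl_seconds
        )

    def is_valid(self, credential, permission):
        return permission in credential.permissions and self._clock() < credential.expires_at


class FakeClock:
    """Controllable clock so the example does not need to sleep."""

    def __init__(self, now=0.0):
        self.now = now

    def __call__(self):
        return self.now


clock = FakeClock()
broker = CredentialBroker({"deploy-bot": {"storage:read", "storage:write"}}, clock=clock)
credential = broker.issue("deploy-bot", {"storage:read"}, ttl_seconds=900)
valid_now = broker.is_valid(credential, "storage:read")
clock.now += 901  # move past the expiry time
valid_later = broker.is_valid(credential, "storage:read")
```

Because every credential carries its own expiry, a leaked token is only useful for a limited window, unlike a static key stored in code or configuration.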

It is time to embrace a zero-trust mindset as part of architecture decisions. Assume identities can come from any place, at any time, and we cannot automatically trust them. Every request needs to be evaluated, authorized, and eventually audited for incident response/troubleshooting purposes.

When a request to access a production environment is raised, we need to embrace break-glass processes, making sure we grant only the permissions required (usually to members of the SRE or DevOps team), and that those permissions are automatically revoked afterwards.

Mistake #8 — Rushing into the cloud with traditional data center knowledge

We should never ignore our team’s knowledge and experience.

Rushing to adopt cloud services, while using old data center knowledge is prone to failure — it will cost the organization a lot of money, and it will most likely be inefficient (in terms of resource usage).

Instead, we should embrace the change, learn how cloud services work, gain hands-on practice (by deploying test labs and playing with the different services in different architectures), and not be afraid to fail and quickly recover.

To succeed in working with cloud services, you should be a generalist. The old mindset of specialty in certain areas (such as networking, operating systems, storage, database, etc.) is not sufficient. You need to practice and gain wide knowledge about how the different services work, how they communicate with each other, what their limitations are, and, not least, what their pricing options are when you consider selecting a service for a large-scale production system.

Do not assume traditional data center architectures will be sufficient to handle the load of millions of concurrent customers. The cloud allows you to create modern architectures, and in many cases, there are multiple alternatives for achieving business goals.

Keep learning and searching for better or more efficient ways to design your workload architectures (who knows, maybe in a year or two there will be new services or new capabilities to achieve better results).

Summary

There is no doubt that the public cloud allows us to build and deploy applications for the benefit of our customers while breaking loose from the limitations of the on-prem data center (in terms of automation, scale, infinite resources, and more).

Embrace the change by learning how to use the various services in the cloud, adopt new architecture patterns (such as event-driven architectures and APIs), prefer managed services (to allow you to focus on developing new capabilities for your customers), and do not be afraid to fail — this is the only way you will gain knowledge and experience using the public cloud.

My 2025 Wishlist for Public Cloud Providers

Anyone who has been following me on social media knows that I am a huge advocate of the public cloud.

By now, we are just after the biggest cloud conferences — Microsoft Ignite 2024 and AWS re:Invent 2024, and just before the end of 2024.

As we are heading to 2025, I thought it would be interesting to share my wishes from the public cloud providers in the coming year.

Resiliency and Availability

The public cloud has existed for more than a decade, and at least according to the CSPs’ documentation, it is designed to survive major or global outages impacting customers all over the world.

And yet, in 2024 each of the CSPs suffered major outages. To name a few:

- Summary of the Amazon Kinesis Data Streams Service Event in Northern Virginia (US-EAST-1) Region

- Azure Incident Retrospective: Storage issues in Central US

- Incident affecting Cloud Firestore, Google App Engine, Google Cloud Functions

In most cases, the root cause of outages is unverified code/configuration changes, or a lack of resources due to spikes in, or unexpected use of, specific resources.

The result always impacts customers in a specific region, or worse in multiple regions.

Although CSPs implement different regions and AZs to limit the blast radius and decrease the chance of major customer impact, in many cases we realize that critical services have their control plane (the central management system that provides orchestration, configuration, and monitoring capabilities) deployed in a central region (usually in East US data centers), and the blast radius impacts customers all over the world.

My wish for 2025 from CSPs — improve the level of testing, and observability, for any code or configuration change (whether done by engineers, or by automated systems).

For the long term, CSPs should find a way to design the service control plane to be synced and spread across multiple regions (at least one copy in each continent), to limit the blast radius of global outages.

Secure by Default

Reading the announcements of new services, and the services’ official documentation, we can see that the CSPs understand the importance of “secure by default”, i.e., enabling a service or capability where security configuration was designed in from day 1.

And yet, in 2024 each of the CSPs suffered security incidents resulting from a misconfiguration. To name a few:

- AWS Security Bulletin AWS-2024–003

- Microsoft Power Pages: Data Exposure Reviewed

- Exploring Google Cloud Default Service Accounts: Deep Dive and Real-World Adoption Trends

It is always best practice to read the vendor’s documentation, and understand the default settings or behavior of every service or capability we are enabling, however, following the shared responsibility model, as customers, we expect the CSPs to design everything secured by default.

I understand that some CSPs’ product groups have an agenda for releasing new services to the market as quickly as possible, allowing customers to experience and adopt new capabilities, but security must be job zero.

My wish for 2025 from CSPs is to put security higher in your priorities — this is relevant for both the product groups and the development teams of each product.

Invest in threat modeling, from the design phase until each service/capability is deployed to production, and try to anticipate what could go wrong.

Choose secure/closed by default (and provide documentation to allow customers to choose if they wish to change the default settings), instead of keeping services exposed, which forces customers to make changes after the fact (after their data was already exposed to unauthorized parties).

Service Retirements

I understand that from time to time a product group, or even the business of a CSP reviews the list of currently available services and decides to retire a service, leaving their customers with no alternative or migration path.

In 2024 we saw several publications of service retirements. To name a few:

- AWS to discontinue Cloud9, CodeCommit, CloudSearch, and several other services

- Azure Media Services retirement guide

- Google Cloud Platform (GCP) has announced the end-of-sale for Cloud Source Repositories

The leader of service retirement/deprecation is GCP, followed by Azure.

In some cases, customers receive (short) notice, asking them to migrate their data and find an alternate solution, but from a customer point of view, it does not look good (to be politically correct) that services we have been using for a while stop working and we need to find alternate solutions for production environments.

Although AWS was far from ideal when decommissioning services such as Cloud9 and CodeCommit, its approach is different from the rest of the cloud providers, thanks to its working backwards development methodology.

My wish for 2025 from CSPs is to put customers first and do market research beforehand. Check with your customers what capabilities they are looking for, before beginning the development of a new service.

Even if the market changes over time, remember that you have production customers using your services. Prepare alternatives in advance and a documented migration path to those alternatives. Do everything you can to support services for a very long time, and if there is no other alternative, keep supporting your services, even with no new capabilities, but at least your customers will know that in case of production issues, or discovered security vulnerabilities, they will have support and an SLA.

Cost and Economies of Scale

When organizations began migrating their on-prem workloads to the public cloud, the notion was that due to economies of scale, the CSPs would be able to offer their customers cost-effective alternatives for consuming services and infrastructure, compared to the traditional data centers.

Many customers got the equation wrong, trying to compare the cost of hardware (such as VMs and storage) between their data center, and the public cloud alternative, without adding to the equation the cost of maintenance, licensing, manpower, etc., and the result was a higher cost for “lift & shift” migrations in the public cloud. In the long run, after a decade of organizations working with the public cloud, the alternative of re-architecture provides much better and cost-effective results.

Although we have not seen documented publications of CSPs announcing an increase in service costs, there are cases that from a customer’s point of view simply do not make sense.

A good example is egress data cost. If all CSPs do not charge customers for ingress data costs, there is no reason to charge for egress data costs. It is the same hardware, so I really cannot understand the logic in high (or any) charges of egress data. Customers should have the option to pull data from their cloud accounts (sometimes to keep data on-prem in hybrid environments, and sometimes to allow migration to other CSPs), without being charged.

The same rule applies to inter-zone traffic charges (see AWS and GCP documentation), or to enabling private traffic inside the CSPs’ backbone (see AWS, Azure, and GCP documentation).

My wish for 2025 from CSPs is to put customers first. CSPs are already encouraging customers to build highly-available infrastructure spanned across multiple AZs, and encouraging customers to keep the services that support customers’ data private (and not exposed to the public Internet). Although the public cloud is a business that wishes to gain revenue, CSPs should think about their customers, and offer them more capabilities, but at lower prices, to make the public cloud the better and cost-effective alternative to the traditional data centers.

Vendor Lock-In

This was a challenge from the initial days of the public cloud. Each CSP offered its alternative and list of services, with different capabilities, and naturally different APIs.

From an architectural point of view, customers should first understand the business demands, before choosing a technology (or specific services from a specific CSP).

Each CSP offers its services, and it does not mean it has to be a negative thing — if in doubt, I highly recommend you to watch the lecture “Do modern cloud applications lock you in?” by Gregor Hohpe, from AWS re:Invent 2023.

In the past, there was the notion that packaging our applications inside containers and perhaps using Kubernetes (in its various managed alternatives), would enable customers to switch between cloud providers or deploy highly-available workloads on top of multiple CSPs. This notion was found to be false since containers do not live in a vacuum, and customers do not pack their entire application inside a single container/microservice. Cloud-native applications are deployed inside a cloud eco-system, and consume data from other services such as storage, networking, databases, message queuing, etc., so trying to migrate between CSPs will still require a lot of effort connecting to different sets of APIs.

My wish for 2025 from CSPs, and I know it is a lot to ask, but could you invest in standardization of your APIs?

Instead of customers having to add abstraction layers on top of cloud services, forcing them to choose the lowest common denominator, why not offer the same APIs, and hopefully the same (or mostly the same) capabilities?
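To make the cost of such abstraction layers concrete, here is a minimal sketch of what customers typically write today. The `ObjectStore` interface, the in-memory implementation, and the `archive_report` function are hypothetical; a real layer would need one adapter per provider SDK, and the interface can only expose operations that every provider supports.

```python
from typing import Protocol


class ObjectStore(Protocol):
    """Provider-neutral interface: only operations common to all providers."""

    def put(self, key: str, data: bytes) -> None: ...
    def get(self, key: str) -> bytes: ...


class InMemoryStore:
    """Stand-in adapter; real adapters would wrap each provider's SDK."""

    def __init__(self):
        self._objects = {}

    def put(self, key: str, data: bytes) -> None:
        self._objects[key] = bytes(data)

    def get(self, key: str) -> bytes:
        return self._objects[key]


def archive_report(store: ObjectStore, report_id: str, body: str) -> str:
    """Application code depends on the interface, not on a specific provider."""
    key = f"reports/{report_id}.txt"
    store.put(key, body.encode("utf-8"))
    return key


store = InMemoryStore()
saved_key = archive_report(store, "2025-01", "monthly summary")
restored = store.get(saved_key).decode("utf-8")
```

Every provider-specific capability that does not fit the shared interface is lost to the application, and the adapters themselves become code that the customer has to maintain.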

If we look at Kubernetes and its Container Storage Interface (CSI) as two examples, they allow customers to consume container orchestration and backend storage using similar APIs, and they are both supported by the CNCF, which gives customers an easy way to deploy and maintain cloud resources, even on top of different CSPs.

Summary

There are a lot more things I wish Santa Claus could bring me in 2025, but as it relates to the public cloud, I truly wish each of the CSPs’ product groups could read my blog post and begin making the required changes to give customers a better experience in all the areas that I have mentioned.

For the readers in the audience, feel free to contact me on social media, and share with me your thoughts about this blog post.

Qualities of a Good Cloud Architect

In 2020 I published a blog post called “What makes a good cloud architect?”, where I tried to lay out some of the main qualities required to become a good cloud architect.

Four years later, I still believe most of the qualities are crucial, but I would like to focus on what I believe today is critical to succeed as a cloud architect.

Be able to see the bigger picture

A good cloud architect must be able to see the bigger picture.

Before digging into details, an architect must be able to understand what the business is trying to achieve (from a chatbot, e-commerce mobile app, a reporting system for business analytics, etc.)

Next, it is important to understand technological constraints (such as service cost, resiliency, data residency, service/quota limits, etc.)

A good architect will be able to translate business requirements, together with technology constraints, into architecture.

Being multi-lingual

An architect should be able to speak multiple languages: speak to business decision-makers, understand their goals, and be able to translate them for technical teams (such as developers, DevOps, IT, engineers, etc.)

The business never comes with a requirement “We would like to expose an API to end-customers”. They will probably say “We would like to provide customers valuable information about investments” or “Provide patients with insights about their health”.

Being multi-disciplinary

There is always the debate between someone who is a specialist in a certain area (from being an expert in a specific cloud provider’s eco-system or being an expert in specific technology such as Containers or Serverless) and someone who is a generalist (having broad knowledge about cloud technology from multiple cloud providers).

I am always in favor of being a generalist, having hands-on experience working with multiple services from multiple cloud providers, knowing the pros and cons of each service, making it easier to later decide on the technology and services to implement as part of an architecture.

Being able to understand modern technologies

The days of architectures based on VMs are almost gone.

A good cloud architect will be able to understand what an application is trying to achieve, and be able to embed modern technologies:

- Microservice architecture, to split a complex workload into small pieces, developed and owned by different teams

- Containerization solutions, from managed Kubernetes services to simpler alternatives such as Amazon ECS, Azure Container Apps, or Google Cloud Run

- Function-as-a-Service, being able to process specific tasks such as image processing, handling user registration, error handling, and much more.

Note: Although FaaS is considered vendor-opinionated, and there is no clear process to migrate between cloud providers, once decided on a specific CSP, a good architect should be able to find the pros for using FaaS as part of an application architecture.

- Event-driven architecture has many benefits in modern applications, from decoupling complex architecture, the ability for different components to operate independently, the ability to scale specific components (according to customers’ demand) without impacting other components of the application, and more.

Microservices, Containers, or FaaS do not have to be the answer for every architecture, but a good cloud architect will be able to find the right tools to achieve the business goals, sometimes by combining different technologies.

We must remember that technology and architecture change and evolve. A good cloud architect should reassess past architecture decisions, to see if, over time, different architecture can provide better results (in terms of cost, security, resiliency, etc.)

Understanding cloud vs. on-prem

As much as I admire organizations that can design, build, and deploy production-scale applications in the public cloud, I admit the public cloud is not a solution for 100% of the use cases.

A good cloud architect will be able to understand the business goals, with technological constraints (such as cost, resiliency requirements, regulations, team knowledge, etc.), and be able to understand which workloads can be developed as cloud-native applications, and which workloads can remain, or even be developed from scratch, on-prem.

I do believe that to gain the full benefits of modern technologies (from elasticity, infinite scale, use of GenAI technology, etc.) an organization should select the public cloud, but for simple or stable workloads, an organization can find suitable solutions on-prem as well.

Thoughts of Experienced Architects

Make unbiased decisions

“A good architecture allows major decisions to be deferred (to a time when you have more information). A good architecture maximizes the number of decisions that are not made. A good architecture makes the choice of tools (database, frameworks, etc.) irrelevant.”

Source: Allen Holub

Beware the Assumptions

“Unconscious decisions often come in the form of assumptions. Assumptions are risky because they lead to non-requirements, those requirements that exist but were not documented anywhere. Tacit assumptions and unconscious decisions both lead to missed expectations or surprises down the road.”

Source: Gregor Hohpe

Cloud building blocks – putting things together

“A cloud architect is a system architect responsible for putting together all the building blocks of a system to make an operating application. This includes understanding networking, network protocols, server management, security, scaling, deployment pipelines, and secrets management. They must understand what it takes to keep systems operational.”

Source: Lee Atchison

Being a generalist

“Good generalists need to cast a wider net to define the best-optimized technologies and configurations for the desired business solution. This means understanding the capabilities of all cloud services and the trade-offs of deploying a heterogeneous cloud solution.”

Source: David Linthicum

The importance of cost considerations

“By considering cost implications early and continuously, systems can be designed to balance features, time-to-market, and efficiency. Development can focus on maintaining lean and efficient code. And operations can fine-tune resource usage and spending to maximize profitability.”

Source: Dr. Werner Vogels

Summary

There are many more qualities of a good and successful cloud architect (from understanding cost decisions, cybersecurity threats and mitigations, designing for scalability, high availability, resiliency, and more), but in this blog post, I have tried to mention the qualities that in 2024 I believe are the most important ones.

Whether you just entered the role of a cloud architect, or if you are an experienced cloud architect, I recommend you keep learning, gain hands-on experience with cloud services and the latest technologies, and share your knowledge with your colleagues, for the benefit of the entire industry.

About the author

Eyal Estrin is a cloud and information security architect, and the author of the books Cloud Security Handbook and Security for Cloud Native Applications, with more than 20 years in the IT industry.

You can connect with him on social media (https://linktr.ee/eyalestrin).

Opinions are his own and not the views of his employer.

Unpopular opinion about “Moving back to on-prem”

Over the past year, I have seen a lot of posts on social media about organizations moving back from the public cloud to on-prem.

In this blog post, I will explain why I believe it is nothing more than a myth, and why the public cloud is the future.

Introduction

Anyone who follows my posts on social media knows that I am a huge advocate of the public cloud.

Back in 2023, I published a blog post called “How to Avoid Cloud Repatriation”, where I explained why I believe that organizations rushed to the public cloud without a clear strategy to guide them on which workloads are suitable to run in the public cloud, and without investing in cost management, employee training, etc.

I am aware of the Barclays report from mid-2024 claiming that, according to conversations they had with CIOs, 83% of the surveyed organizations plan to move workloads back to private cloud. On the other hand, a report from Synergy Research Group (published in August 2024) claims that “hyper-scale operators are 41% of the worldwide capacity of all data centers”, and “Looking ahead to 2029, hyper-scale operators will account for over 60% of all capacity, while on-premises will drop to just 20%”.

Analysts claim there is a trend of organizations moving back to on-prem, but the newspapers are far from being filled with stories of customers (specifically enterprises) that moved their production workloads from the public cloud back to on-prem.

You may be able to find some stories about small companies (with stable workloads and highly skilled personnel) that decided to move back to on-prem, but it is far from being a trend.

I do not disagree that large workloads in the public cloud will cost an organization a lot of money, but it raises a question:

Has the organization embedded cost as part of any architecture decision from day 1, or has the organization ignored cost for a long time and realized now that the usage of cloud resources costs a lot of money if not managed properly?

Why do I believe the future is in the public cloud?

I am not looking at the public cloud as a solution for all IT questions/issues.

As with any (kind of) new field, an organization must invest in learning the topic from the bottom up, consult with experts, create a cloud strategy, and invest in cost, security, sustainability, and employee training, to be able to get the full benefits of the public cloud.

Let us dig deeper into some of the main areas where we see benefits of the public cloud:

Scalability

One of the huge benefits of the public cloud is the ability to scale horizontally (i.e., add or remove compute, storage, or network resources according to customer demand).

Were you able to scale horizontally using traditional virtualization on-prem? Yes.

Did you have the capacity to scale virtually without limit? No. Organizations are always limited by the amount of hardware they purchase and deploy in their on-prem data center.
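To make the difference concrete, here is a minimal sketch of a proportional scale-out rule, under stated assumptions. The function name, the load metric, and the numbers are hypothetical and chosen only for illustration; real autoscalers add cooldowns, step limits, and more. The only point is that the same rule gives a different answer once a fixed hardware ceiling (`max_capacity`) applies.

```python
import math
from typing import Optional


def desired_instances(current: int,
                      observed_load: float,
                      target_load: float,
                      max_capacity: Optional[int] = None) -> int:
    """Return how many instances are needed to bring the average load
    per instance back to target_load (simple proportional rule).

    max_capacity models a hard ceiling, e.g. the hardware already
    purchased on-prem. None means no practical ceiling is modeled.
    """
    wanted = max(1, math.ceil(current * observed_load / target_load))
    if max_capacity is not None:
        wanted = min(wanted, max_capacity)
    return wanted


# Hypothetical spike: 4 instances running at 300% of the target load.
elastic = desired_instances(4, observed_load=300, target_load=60)
capped = desired_instances(4, observed_load=300, target_load=60, max_capacity=8)
print(elastic, capped)
```

With these hypothetical inputs, the uncapped call asks for 20 instances, while the capped call stops at the 8 that physically exist, and the remaining demand goes unserved.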

Data center management

Regardless of what people may believe, most organizations do not have the experience of building and maintaining data centers that are physically secured, energy efficient, and highly available at the level a CSP provides.

Data centers do not produce any business value (unless you are in the data center or hosting industry), and in most cases, moving the responsibility to a cloud provider will be more beneficial for the organization.

Hardware maintenance

Let us assume your organization decided to purchase expensive hardware for its SAP HANA cluster, or an NVIDIA cluster with the latest GPUs for AI/ML workloads.

In this scenario, your organization will need to pay in advance for several years and train your IT staff on the deployment and maintenance of the purchased hardware (do not forget the cooling of GPUs…). The moment you complete deploying the new hardware, your organization is in charge of the ongoing maintenance until the hardware becomes outdated (probably a couple of weeks or months after you purchased it), and now you are stuck with old hardware that will not be able to suit your business needs (such as the latest GenAI LLMs).

In the public cloud, you scale as needed and pay only for the resources being used (and you can lower the total cost further by choosing Spot or savings plans).
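The trade-off between paying in advance and paying per use can be sketched with some simple arithmetic. Everything below is an assumption for illustration only: the function names are hypothetical, and the prices are placeholders, not real quotes from any vendor or cloud provider.

```python
def on_prem_total(upfront: float, yearly_maintenance: float, years: int) -> float:
    """Total cost of owned hardware: paid regardless of how much it is used."""
    return upfront + yearly_maintenance * years


def cloud_total(hourly_rate: float, hours_per_year: float, years: int) -> float:
    """Total pay-per-use cost: grows only with the hours actually consumed."""
    return hourly_rate * hours_per_year * years


def break_even_hours_per_year(upfront: float, yearly_maintenance: float,
                              hourly_rate: float, years: int) -> float:
    """Yearly usage above which owning becomes cheaper than renting."""
    return on_prem_total(upfront, yearly_maintenance, years) / (hourly_rate * years)


# Placeholder numbers: a 3-year horizon for a hypothetical GPU cluster.
owned = on_prem_total(upfront=300_000, yearly_maintenance=30_000, years=3)
rented = cloud_total(hourly_rate=20.0, hours_per_year=2_000, years=3)
threshold = break_even_hours_per_year(300_000, 30_000, 20.0, 3)
print(owned, rented, threshold)
```

The sketch ignores staff time, cooling, power, and the hardware becoming outdated before the period ends, all of which push the break-even point further in favor of pay-per-use.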

Using or experimenting with new technology

In the traditional data center, we are stuck with a static data center mentality, i.e., use what you currently have.

One of the greatest capabilities the public cloud offers us is switching to a dynamic mindset. Business managers would like their organizations to provide new services to their customers, in a short time-to-market.

A new mindset encourages experimentation, allowing development teams to build new products, experiment with them, and if the experiment fails, switch to something else.

One example of experimentation is the sudden surge in the usage of GenAI technology. Suddenly everyone is using (or planning to use) LLMs to build solutions (from chatbots, through text summarization, to image or video generation).

Only the public cloud will allow organizations to experiment with the latest hardware and the latest LLMs for building GenAI applications.

If you try to experiment with GenAI on-prem, you will have to purchase dedicated hardware (which will soon get outdated and will not remain sufficient for your business needs for long), and you will suffer from resource limitations (at least when using the latest LLMs).

Storage capacity

In the traditional data center, organizations (almost) always suffer from limited storage capacity.

The more data organizations collect (for business analytics, providing added value to customers, research, AI/ML, etc.), the more data will be produced and will need to be stored.

On-prem, you are ultimately limited by the amount of storage you can purchase and physically deploy in your data center.

Once organizations (usually large enterprises) store PBs of data in the public cloud, the cost and time to move such amounts of data out of the public cloud to on-prem (or even to another cloud provider) will be so high that most organizations will eventually keep their data where it is, and moving away from their existing cloud provider will become a hard decision.
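A quick back-of-the-envelope calculation shows why moving PBs of data is so painful. This is a minimal sketch under stated assumptions: the function names are hypothetical, the link speed and utilization are illustrative, and the per-GB price is a placeholder, not the actual egress price of any cloud provider.

```python
PB = 10**15  # bytes in one petabyte (decimal)


def transfer_days(data_bytes: float, link_bits_per_second: float,
                  utilization: float = 1.0) -> float:
    """Days needed to push data_bytes over a link, given the fraction
    of the link that can realistically be used for the transfer."""
    seconds = data_bytes * 8 / (link_bits_per_second * utilization)
    return seconds / 86_400


def egress_cost(data_bytes: float, price_per_gb: float) -> float:
    """Egress charge for data_bytes at a flat per-GB price (placeholder)."""
    return data_bytes / 1e9 * price_per_gb


# Hypothetical case: 5 PB over a dedicated 10 Gbps link at 80% utilization.
days = transfer_days(5 * PB, link_bits_per_second=10e9, utilization=0.8)
cost = egress_cost(5 * PB, price_per_gb=0.05)
print(round(days, 1), round(cost))
```

Under these assumptions, the transfer alone takes roughly two months of a fully dedicated link, before counting the target storage hardware, validation, and cut-over work.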

Modern / Cloud-native applications

Building modern applications changes the way organizations develop and deploy new applications.

Most businesses would like to move faster and provide new solutions to their customers.

Although you could develop new applications based on Kubernetes on-prem, the cost and complexity of managing the control plane, and the limited scale capabilities, will make your solution a wannabe cloud: a small and pale version of the public cloud.

You could find Terraform/OpenTofu providers for some of the resources that exist on-prem (mostly for the legacy virtualization), but how do you implement infrastructure-as-code (not to mention policy-as-code) in legacy systems? How will you benefit from automated system deployment capabilities?

Conversation about data residency/data sovereignty

This is a hot topic, at least since the GDPR in the EU became effective in 2018.

Today most public cloud providers have regions in most (if not all) countries with data regulation laws.

Not to mention that 85-90 percent of all IaaS/PaaS solutions are regional, meaning, the CSP will not transfer your data from the EU to the US unless you specifically design your workloads accordingly (due to egress data cost, and service built-in limitations).

If you want to add an extra layer of assurance, choose cloud services that allow you to encrypt your data using customer-managed keys (i.e., keys for which the customer controls the generation and rotation process).

Summary

I am sure we can continue and deep dive into the benefits of the public cloud vs. the limitations of the on-prem data center (or what people sometimes refer to as “private cloud”).

For the foreseeable future (and I am not saying this as something beneficial), we will continue to see hybrid clouds, while more and more organizations will see the benefits of the public cloud and migrate their production workloads and data to the public cloud.

We will continue to find scenarios where the on-prem and legacy applications will continue to provide value for organizations, but as technology evolves (see GenAI for example), we will see more and more organizations consuming public cloud services.

To gain the full benefit of the public cloud, organizations need to understand how the public cloud can support their business, allowing them to focus on what matters (such as developing new services for their customers), and reduce the effort spent on data center maintenance.

Organizations should not neglect cost, security, sustainability, and employee training, to be able to gain the full benefit of the public cloud.

I strongly believe that the public cloud is the future for developing innovative solutions, while shifting the hardware and data center responsibility to companies that specialize in this field.

Why do I call it an “unpopular opinion”? When people are reluctant to change, they would rather stick with what they know and are familiar with. Change can be challenging, but if organizations embrace the change, look strategically into the future, embed cost into their decisions, and invest in employee training, they will be able to adapt to the change and see its benefits.


Comparison of Serverless Development and Hosting Platforms

When designing solutions in the cloud, there is (almost) always more than one alternative for achieving the same goal.

One of the characteristics of cloud-native applications is the ability to have an automated development process (such as the use of CI/CD pipelines).

In this blog post, I will compare serverless solutions for developing and hosting web and mobile applications in the cloud.

Why choose a serverless solution?

From a developer’s point of view, there is (almost) no value in maintaining infrastructure – the whole purpose is to enable developers to write new applications/features and provide value to the company’s customers.

Serverless platforms allow us to focus on developing new applications for our customers, without the burden of maintaining the lower layers of the infrastructure, i.e., virtual machine scale, patch management, host machine configuration, and more.

Serverless development and hosting platforms give us a complete CI/CD workflow, from the Git repository to the build stage, and finally deployment to the various application stages (Dev, Test, Prod), in a single solution (the Git repository itself is still outside the scope of such services).

Serverless development and hosting platforms allow us to deploy fully functional applications at any scale, from a small test environment to a large-scale production application, which we can put behind a content delivery network (CDN) and a WAF, and make accessible to external or internal customers.

Serverless development platform workflow

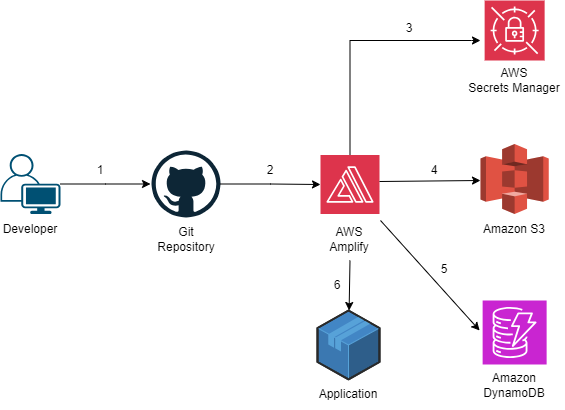

Below is a sample workflow for developing and deploying an application based on a Serverless platform:

- A developer writes code and pushes the code to a Git repository

- A new application is configured using AWS Amplify, based on the code from the Git repository

- AWS Amplify pulls secrets from AWS Secrets Manager to connect to AWS resources

- The new application is configured to connect to Amazon S3 for uploading static content

- The new application is configured to connect to Amazon DynamoDB for storing and retrieving data

- The new application is deployed using AWS Amplify

Note: The example below is based on AWS services but can be configured similarly on the other cloud platforms mentioned in this blog post.

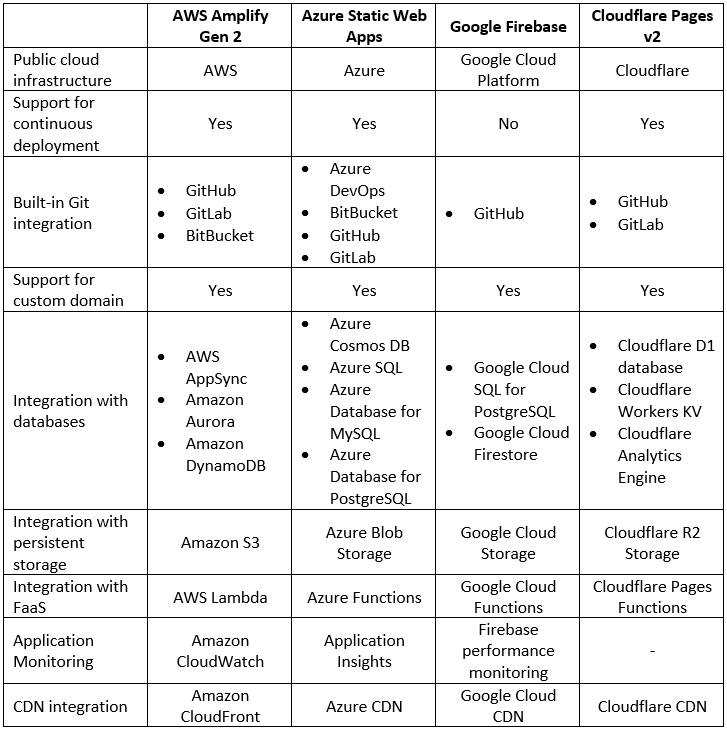

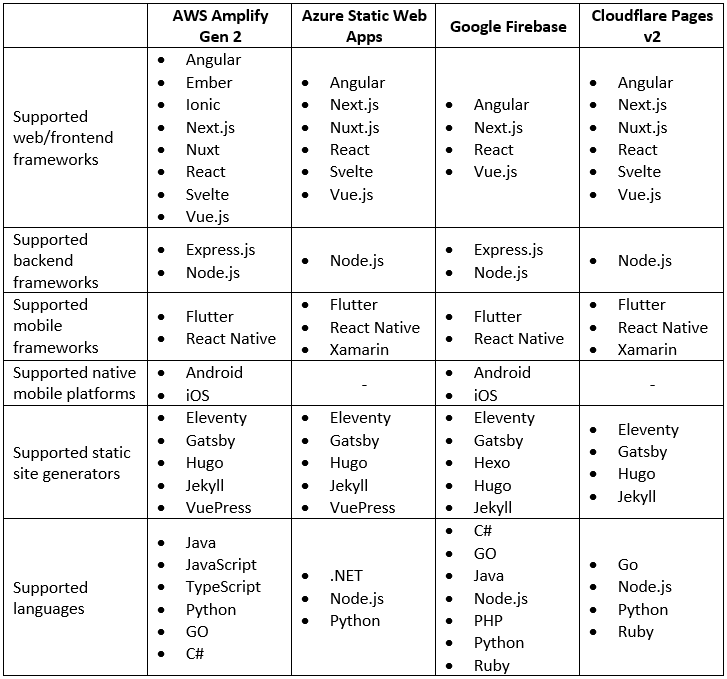

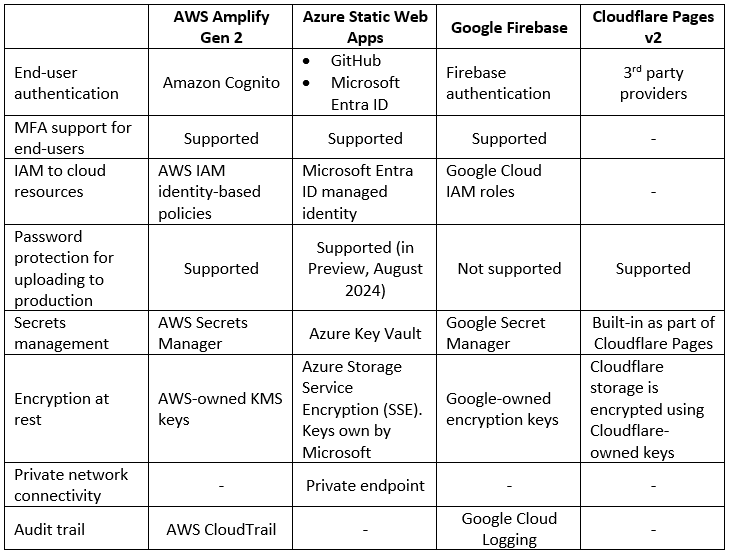

Service Comparison